The Ethics of Memory in the Age of Total Surveillance

Think about this.

A post you wrote ten years ago.

A photo you forgot existed.

A mistake you believed had quietly faded with time.

Now imagine all of it—still there.

Searchable. Traceable. Permanent.

In today’s digital world, forgetting is no longer natural.

Digital systems can record, store, and retrieve almost anything at any moment.

As a result, we are left with a difficult question:

If nothing is ever truly forgotten…

Is forgetting a moral failure—or a fundamental human right?

1. A Society Without Forgetting Is a Society Without Forgiveness

The Permanence of Mistakes

In a world of permanent records, mistakes do not disappear.

A careless tweet from adolescence.

An impulsive decision.

A moment of poor judgment.

These fragments can follow a person for decades.

For example, employers search digital histories.

Public figures are judged by their past statements.

Even ordinary individuals live with the fear of being remembered too well.

The Disappearance of Forgiveness

However, human beings are not static.

We grow.

We change.

And we learn.

This leads to a deeper question.

If the past is never allowed to fade,

what happens to forgiveness?

A society that never forgets

may slowly become a society that cannot forgive.

2. Memory Is Technology—Forgetting Is Humanity

Memory as Data Storage

Memory is becoming increasingly mechanical.

Cloud storage, surveillance systems, blockchain records,

and even experimental neuro-memory technologies

are pushing us toward perfect preservation.

Digital systems can record nearly everything.

And almost anything recorded can be retrieved later.

Forgetting as a Human Process

However, forgetting is not simply loss.

It is:

- emotional release

- space for reflection

- the beginning of healing

In other words, we do not grow only by remembering.

We also grow by letting go.

If memory is accumulation,

then forgetting is transformation.

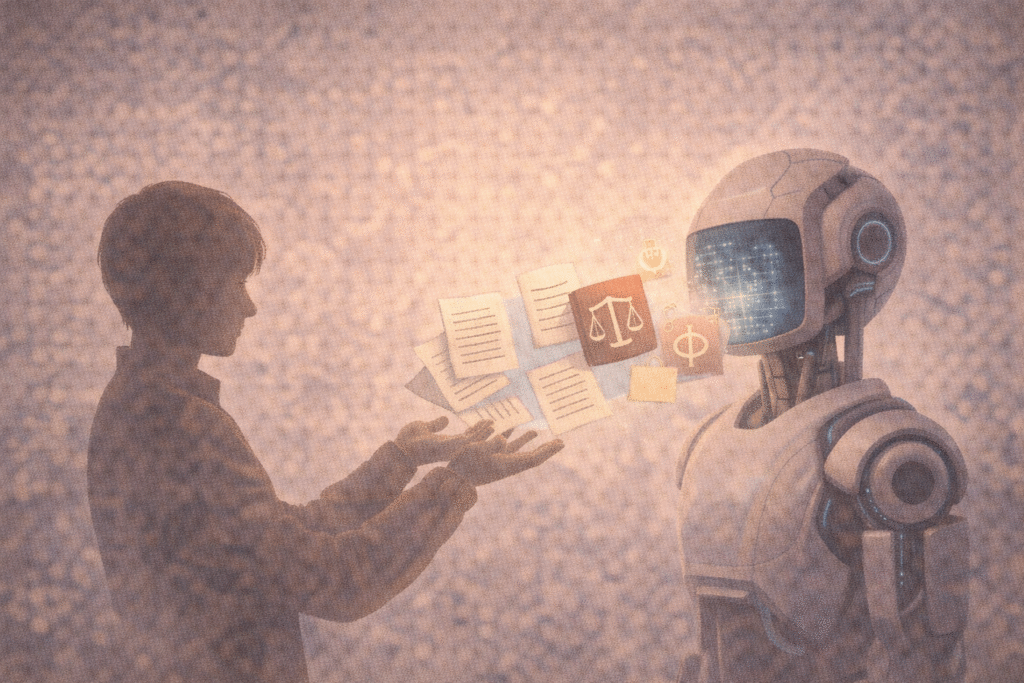

3. The Right to Be Forgotten

Legal Recognition

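In 2014, the Court of Justice of the European Union recognized the “right to be forgotten” in its Google Spain ruling, and the EU’s General Data Protection Regulation later codified it as a right to erasure.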

This allows individuals to request the removal of personal data

from search engines and online platforms under certain conditions.

Ethical Meaning

But this is more than a legal tool.

It reflects a deeper belief.

That human beings are not fixed.

That identity can evolve.

And that dignity includes the ability

to move forward without being permanently defined by the past.

Therefore, we must ask:

Is forgetting an escape from responsibility—

or a necessary condition for personal renewal?

4. Why We Must Be Able to Forget

Memory as Selection

Life is not about storing everything.

It is about choosing what to carry.

What we remember shapes who we become.

At the same time, what we forget also shapes who we are allowed to be.

The Danger of Endless Memory

Without forgetting:

- apologies lose meaning

- growth becomes invisible

- identity becomes frozen

Yet we are slowly being conditioned

to treat forgetting as a flaw.

The real danger may be the opposite:

not forgetting enough.

This is why we must reconsider what it means to be human.

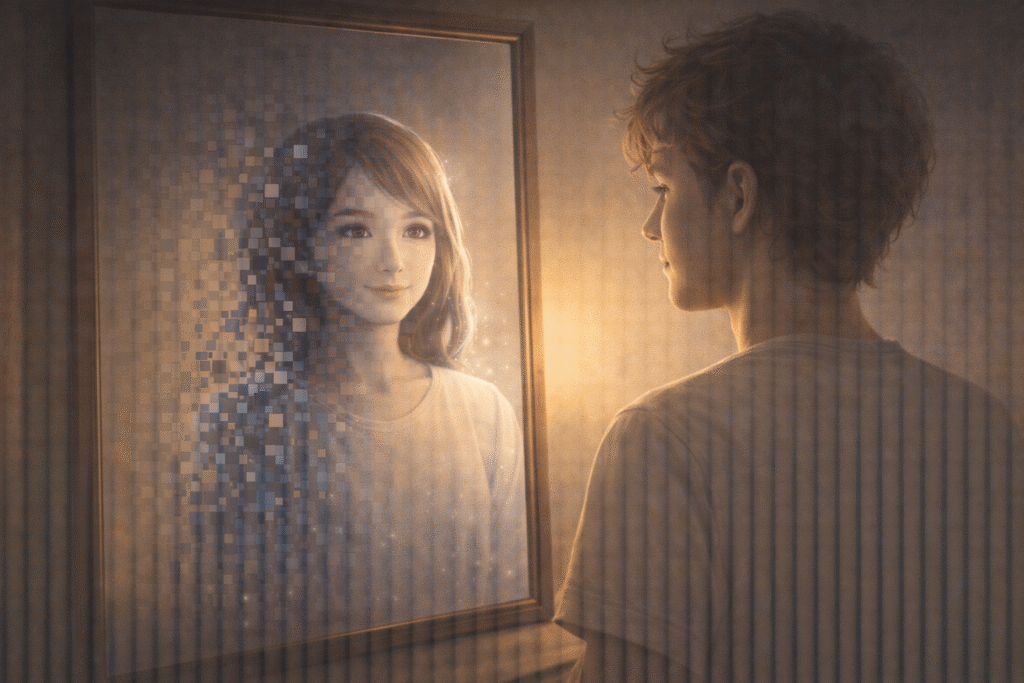

Conclusion: Forgetting as the Last Human Skill

Machines can remember everything.

But they cannot forget in the human sense.

Because forgetting is not computation.

It is shaped by:

- pain

- love

- time

- healing

In a world where everything can be recorded,

we must decide what should remain—and what should fade.

And ultimately, we are left with one final question:

If nothing about your past could ever disappear—

would you still be free to become someone new?

Reader Question

If nothing about your past could ever be erased—

would you still feel free to become someone new?

Related Reading

If nothing is ever truly forgotten in the digital world, can any version of truth remain fixed—or are all records simply interpretations preserved over time?

In “Is There a Single Historical Truth, or Many Narratives?”, we explore how truth is shaped by perspective, power, and interpretation, raising a deeper question about whether permanent records reveal reality or merely freeze one version of it.

If memory can be stored, analyzed, and even predicted by machines, what does that mean for human identity—and the possibility of change?

In “If AI Could Dream, Would It Be Imagination—or Calculation?”, we examine whether artificial intelligence can move beyond data processing toward something like imagination, and how this challenges the boundaries between memory, consciousness, and what it means to be human.
