The Debate Between Linguistic Relativity and Universal Grammar

Every day, we think, speak, and interpret the world through language.

But have you ever wondered—does the language you speak shape how you think?

Or does your mind already possess a structure that simply finds expression through language?

This question lies at the heart of one of the most enduring debates in linguistics, philosophy, and cognitive science. From the Sapir–Whorf hypothesis to Chomsky’s theory of universal grammar, scholars have long struggled to determine which comes first: language or thought.

1. Does Language Shape Thought? — The Sapir–Whorf Hypothesis

The Sapir–Whorf hypothesis, also known as linguistic relativity, argues that the structure of a language influences how its speakers perceive and understand the world.

Edward Sapir and Benjamin Lee Whorf proposed that language is not merely a tool for communication but a framework that actively shapes cognition.

A frequently cited, though contested, example is the claim that some languages have dozens of words for different types of snow while others use only one. If so, this lexical richness may lead speakers to notice and differentiate subtle variations that others might overlook.

Whorf’s analysis of the Hopi language further suggested that its speakers conceive of time not as a linear flow but as cyclical or event-based, although later linguists have disputed this reading of Hopi. For Whorf, such observations implied that language can fundamentally influence how reality itself is experienced.

From this perspective, language acts as a “map of thought,” guiding perception, attention, and interpretation.

2. Does Thought Shape Language? — The Theory of Universal Grammar

In contrast, Noam Chomsky’s theory of universal grammar argues that language is shaped by innate cognitive structures.

According to this view, humans are born with a built-in capacity for language—a universal framework that underlies all linguistic systems. While languages may differ on the surface, they share deep structural similarities rooted in the human mind.

For example, proponents argue that all languages encode relationships between subjects and predicates, which they take as evidence of a common cognitive architecture.

From this perspective, thought precedes language. Language does not define how we think; rather, it expresses thoughts that already exist within a universal mental framework.

3. Evidence and Counterarguments

The debate between these perspectives has been tested through numerous experiments and interdisciplinary research.

Supporters of linguistic relativity often point to color perception studies. Some languages use a single word for both blue and green. In some experiments, speakers of such languages were slower to tell blue and green shades apart than speakers of languages that name the two colors separately, suggesting that linguistic categories influence perception.

On the other hand, proponents of universal grammar highlight that infants—before fully acquiring language—can already understand complex concepts. Additionally, people from different linguistic backgrounds often solve logical problems in similar ways, implying that thought can operate independently of language.

Modern neuroscience adds further complexity. Brain imaging studies suggest that language-processing areas and reasoning areas can function separately, yet linguistic structures still appear to influence attention, memory, and categorization.

4. Modern Implications: Education, AI, and Multicultural Societies

This debate is not merely theoretical—it has profound real-world implications.

In education, if language shapes thought, then learning a new language may open entirely new ways of perceiving the world. Language learning becomes a process of cognitive transformation.

If thought shapes language, however, language learning is more about expressing pre-existing cognitive structures in different forms.

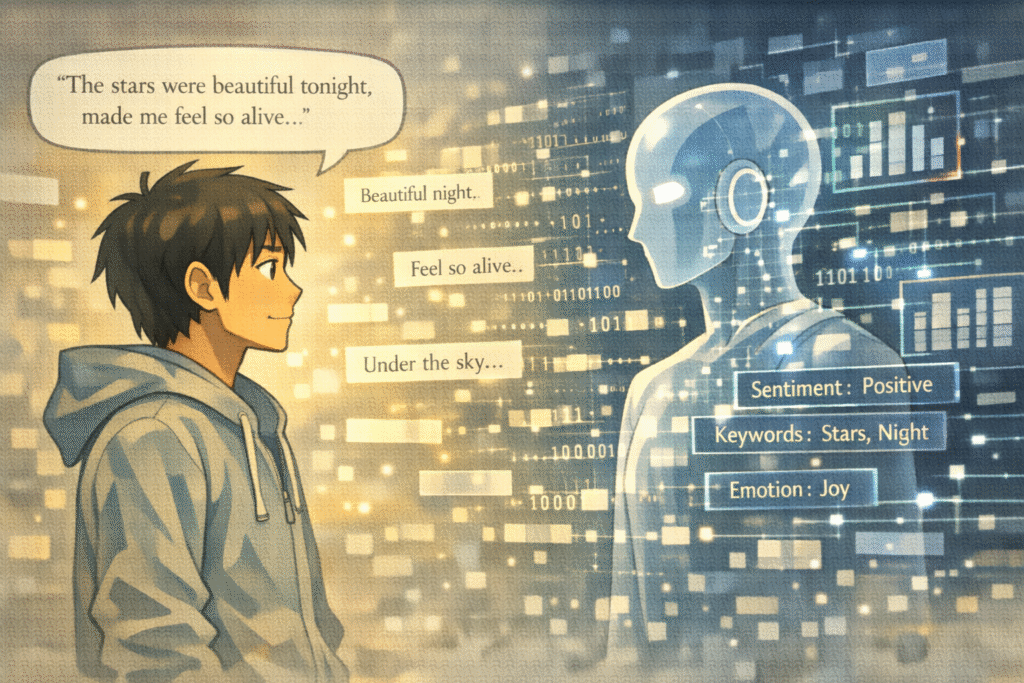

The debate is also central to artificial intelligence. Should AI treat language as data to process, or as a reflection of deeper cognitive structures? The answer influences how we design systems capable of “thinking” like humans.

In multicultural societies, this issue affects how we understand translation, communication, and cultural differences. Are misunderstandings rooted in language, or in deeper cognitive frameworks?

Conclusion: Judgment Deferred

It remains difficult to declare a clear winner in this debate.

Language and thought appear to exist in a dynamic relationship—each shaping and reshaping the other. Language can guide perception, while thought can generate and transform language.

Perhaps the real question is not which comes first, but how deeply they are intertwined.

Are we prisoners of the languages we speak, or are we free thinkers who merely use language as a tool?

The answer may not lie in theory alone, but in how each of us experiences the world through both thought and language.

💬 A Question for Readers

When you learn a new language, do you feel that your way of thinking changes—

or are you simply expressing the same thoughts differently?

Related Reading

The question of who defines human standards is further examined in “Can Humans Be the Moral Standard?”, where the assumption that human judgment is the ultimate reference point is critically challenged in the context of evolving technological systems.

From a broader perspective on human identity and transformation, the limits of what it means to remain human are explored in “Can Technology Surpass Humanity?”, which reflects on how technological advancement may reshape not only our abilities, but the very standards by which we define ourselves.

References

- Whorf, B. L. (1956). Language, Thought, and Reality: Selected Writings. Cambridge, MA: MIT Press.

This work presents one of the most influential formulations of the Sapir–Whorf hypothesis, illustrating how linguistic structures shape patterns of perception and cognition. It provides essential philosophical and anthropological foundations for understanding linguistic relativity and its implications for how humans interpret reality.

- Sapir, E. (1921). Language: An Introduction to the Study of Speech. New York: Harcourt, Brace & Company.

Sapir’s foundational text explores the deep connections between language, culture, and thought, emphasizing that language is not merely a communication tool but a framework shaping worldview. It offers a classical perspective on how linguistic systems influence human cognition and social understanding.

- Chomsky, N. (1965). Aspects of the Theory of Syntax. Cambridge, MA: MIT Press.

Chomsky introduces the theory of universal grammar, arguing that human language is grounded in innate cognitive structures shared across all individuals. This work provides a central argument for the idea that thought precedes language and that linguistic diversity emerges from a common mental framework.

- Vygotsky, L. S. (1986). Thought and Language. Cambridge, MA: MIT Press.

Vygotsky examines the dynamic interaction between language and thought within a sociocultural context, particularly in child development. His work bridges the gap between the two opposing theories by demonstrating how language both shapes and is shaped by cognitive processes.

- Pinker, S. (1994). The Language Instinct: How the Mind Creates Language. New York: William Morrow and Company.

Pinker argues that language is an innate human capacity shaped by evolutionary processes, supporting the view that cognition plays a primary role in forming language. The book combines insights from psychology, linguistics, and biology to explain how language emerges from the human mind.