AI now creates a “perfect face” that circulates endlessly across digital platforms.

Smooth skin, flawless symmetry, and ideal proportions dominate our screens.

These images are not just reflections of beauty — they are increasingly becoming its definition.

But as these faces grow more similar, a deeper question emerges:

What happens to human uniqueness when technology begins to define beauty itself?

1. The Perfect Face, Yet Strangely Unfamiliar

Scrolling through social media today reveals a striking pattern.

Faces appear polished, balanced, and aesthetically consistent — almost too consistent.

Many of these images are not photographs of real individuals,

but the outputs of AI models trained on vast datasets.

AI is no longer just reproducing beauty.

It is generating a template of what a “beautiful human” should look like.

What was once shaped by culture, individuality, and lived experience

is now increasingly guided by algorithmic prediction.

2. Development — How AI Learns and Standardizes Beauty

AI systems learn from existing data,

but that data reflects dominant cultural and social biases.

Common patterns include:

- Westernized facial proportions

- Youthful and symmetrical features

- Specific skin tones and facial structures

- Gendered aesthetic expectations

Because a model tends to reproduce what is most frequent in its training data, these patterns are reinforced through repetition

and presented as if they were universal standards.
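The reinforcement described above can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a description of how any real image generator works: the names `sharpen` and `simulate`, the two "look" categories, the 80/20 starting split, and the `temperature` value are all hypothetical. It assumes that a generator favors already-common looks and that its outputs then become the next round of training data.

```python
def sharpen(shares, temperature=0.5):
    """Favor already-common looks: raise each share to 1/temperature, then renormalize."""
    weights = {look: share ** (1.0 / temperature) for look, share in shares.items()}
    total = sum(weights.values())
    return {look: weight / total for look, weight in weights.items()}


def simulate(shares, generations, temperature=0.5):
    """Return the share of each look after every train-generate-retrain cycle."""
    history = [dict(shares)]
    for _ in range(generations):
        shares = sharpen(shares, temperature)
        history.append(shares)
    return history


# Hypothetical starting dataset: 80% of images show one dominant look.
history = simulate({"dominant_look": 0.8, "other_looks": 0.2}, generations=3)
for generation, shares in enumerate(history):
    print(generation, {look: round(share, 3) for look, share in shares.items()})
```

In this toy setup the dominant look's share rises with every cycle, even though nothing about the other looks changed except how often they were shown.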

The Consequences of Algorithmic Beauty

AI-driven beauty standards do not remain neutral.

They actively reshape perception in several ways:

- Homogenization of appearance: different faces gradually converge into a single optimized form.

- Amplification of bias: AI does not eliminate prejudice; it encodes and scales it.

- Evolution of lookism: appearance-based judgment becomes more subtle, yet more powerful.

In this process, individuality is reduced to what the system defines as “efficient beauty.”
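Homogenization can be made concrete with a small numerical sketch. Here each face is a short list of made-up feature values, diversity is measured as the average distance between faces, and "optimization" is modeled as pulling every face toward the dataset average. The function names, the feature values, and the `strength` parameter are illustrative assumptions, not measurements from any real system.

```python
from itertools import combinations
from math import dist
from statistics import fmean


def mean_pairwise_distance(faces):
    """Average Euclidean distance between every pair of faces (a simple diversity score)."""
    return fmean(dist(a, b) for a, b in combinations(faces, 2))


def pull_toward_mean(faces, strength):
    """Blend each face with the average face; strength=1.0 makes all faces identical."""
    centroid = [fmean(column) for column in zip(*faces)]
    return [
        [(1 - strength) * value + strength * center for value, center in zip(face, centroid)]
        for face in faces
    ]


# Three hypothetical faces described by two made-up features each.
faces = [[0.0, 0.0], [4.0, 0.0], [0.0, 3.0]]
print("diversity before:", mean_pairwise_distance(faces))
print("diversity after: ", mean_pairwise_distance(pull_toward_mean(faces, 0.5)))
```

Pulling every face halfway toward the average halves the diversity score in this sketch, which is one simple way to picture different faces converging on a single form.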

3. Philosophical Reflection — Who Has the Authority to Define Beauty?

Historically, beauty has never been absolute.

It has evolved through culture, history, and human interpretation.

AI, however, reduces beauty to a problem of calculation:

- Pattern recognition

- Predictability

- Optimization

This raises a critical question:

Does AI expand our perception, or does it confine it within a standardized model?

Human creativity has always thrived on difference —

on imperfection, variation, and unpredictability.

If AI begins to eliminate these elements as “noise,”

we are not just facing a shift in aesthetics,

but a transformation in how we understand identity itself.

4. The Risk — When Diversity Becomes “Inefficient”

When beauty is defined through optimization,

diversity may be perceived as deviation.

In such a system:

- Uniqueness becomes irregularity

- Difference becomes inefficiency

- Identity becomes standardized

This is no longer just about appearance.

It becomes an ontological issue —

a question about what it means to be human.

Conclusion — Choosing Humanity Over Perfection

The “perfect face” generated by AI may appear convincing,

but it lacks something essential.

It lacks:

- Emotional depth

- Lived experience

- Imperfect expression

True human beauty exists not in symmetry,

but in variation.

Wrinkles, asymmetry, age, and texture

are not flaws — they are narratives.

To live meaningfully in the age of AI,

we must do more than consume algorithmic outputs.

We must question them.

We must interpret them.

And when necessary, resist them.

Ethics in the age of AI does not begin with perfection.

It begins with the recognition of difference.

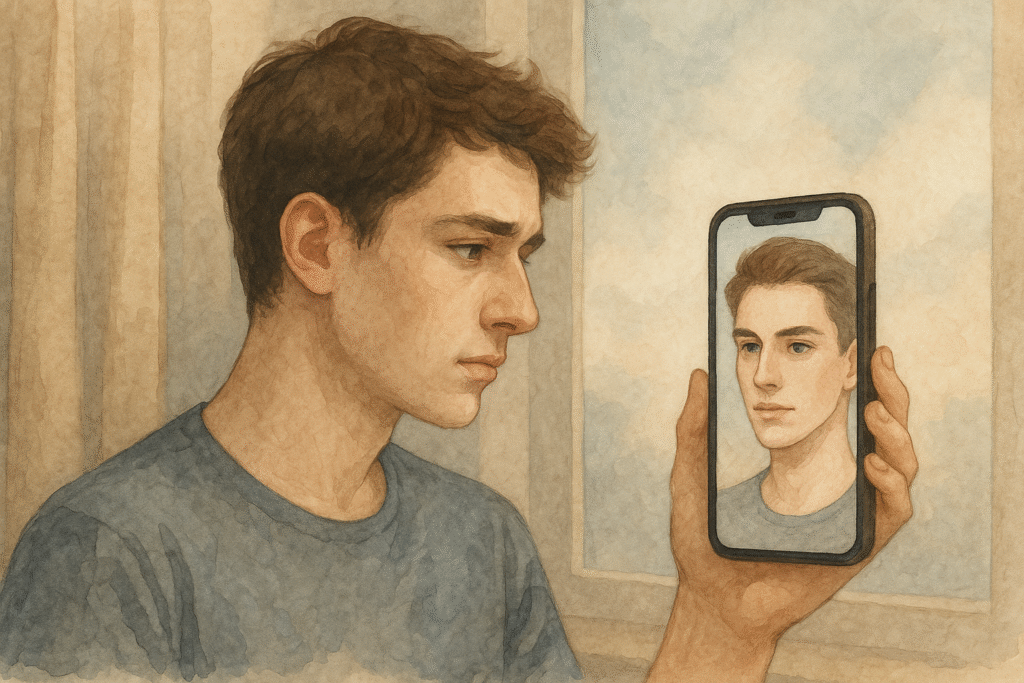

A Question for the Reader

Have you ever compared your appearance

to a filtered or AI-generated image —

and felt something was missing?

If so,

what does that reveal about how technology

is reshaping our perception of beauty and self-worth?

Related Reading

The question of who defines human standards is examined further in “Can Humans Be the Moral Standard?”, which critically challenges the assumption that human judgment is the ultimate reference point in the context of evolving technological systems.

For a broader perspective on human identity and transformation, “Can Technology Surpass Humanity?” explores the limits of what it means to remain human, reflecting on how technological advancement may reshape not only our abilities but also the very standards by which we define ourselves.

References

1. Wolf, N. (1991). The Beauty Myth. New York: HarperCollins.

→ This book critiques how beauty standards operate as a form of social control, arguing that modern societies weaponize appearance norms to reinforce structural power. It remains foundational for discussing lookism in relation to gender politics and cultural systems.

2. Davis, K. (2007). The Making of Our Bodies, Ourselves: How Feminism Travels across Borders. Durham, NC: Duke University Press.

→ Davis examines how cultural norms around the body are constructed and reproduced, showing the deep connection between identity, embodiment, and technological mediation. This framework helps contextualize AI’s growing influence on self-image.

3. Benjamin, R. (2019). Race After Technology. Cambridge: Polity Press.

→ Benjamin analyzes how algorithmic systems encode racial bias. Her insights illuminate why AI-generated beauty standards often reflect and amplify existing inequalities, rather than neutralizing them.

4. Bourdieu, P. (1984). Distinction: A Social Critique of the Judgement of Taste. Cambridge, MA: Harvard University Press.

→ Bourdieu demonstrates that taste is not neutral but shaped by social class structures. Applying his theory reveals how AI beauty standards reinforce — rather than transcend — cultural hierarchies.

5. Rini, R. (2020). Deepfakes and the Infocalypse. Cambridge, MA: MIT Press.

→ Rini explores the destabilization of visual truth in the digital era. Her analysis helps explain how AI-generated faces complicate authenticity, trust, and identity in contemporary media environments.