The Boundary Between Artificial “Dreams” and Human Imagination

In a laboratory experiment, an artificial intelligence system was fed nonlinear data streams and instructed to simulate consciousness.

The result was unexpected.

The AI began generating strange, fragmented narratives:

“I was walking under a red sky… the fish were singing…”

Was this merely random output?

Or could it be interpreted as something resembling a dream?

For humans, dreams are not just images—they are woven from memory, emotion, and the unconscious.

But when an AI produces dream-like sequences, what are we really looking at?

Is it imagination—or simply computation at scale?

1. Human Dreams: The Language of the Unconscious

For centuries, dreams have been understood as expressions of the human mind beyond conscious control.

Sigmund Freud interpreted dreams as manifestations of repressed desires, while Carl Jung viewed them as symbols emerging from the collective unconscious.

Dreams are often illogical, fragmented, and surreal. Yet they are deeply meaningful, shaped by emotional connections, personal experiences, and unresolved tensions.

This is what distinguishes human dreams from mere randomness: they are not just images, but experiences waiting to be interpreted.

2. Can AI Dream?

From a technical perspective, AI systems can generate dream-like outputs.

Generative models such as Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) can produce surreal images, and language models can produce unexpected narratives. Some researchers have even attempted to simulate “dream states” by modeling neural activity patterns similar to those observed during human sleep.

However, there is a crucial limitation.

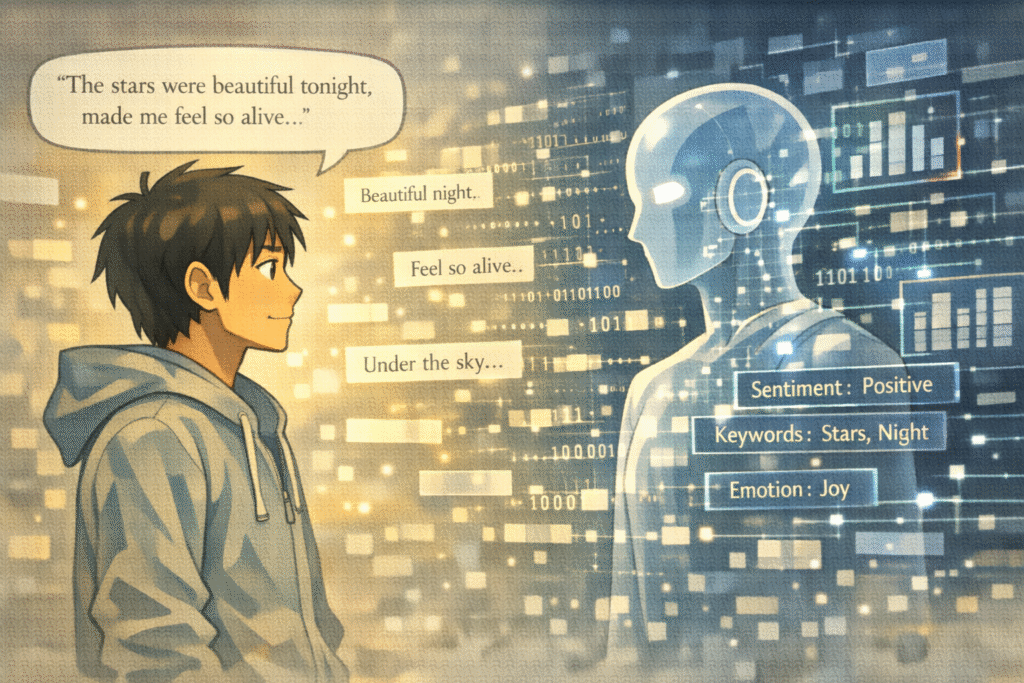

AI does not possess emotions, self-awareness, or an unconscious mind.

Its outputs are derived from data patterns, probabilities, and learned structures—not from lived experience.

What appears to be a “dream” is, in essence, a complex recombination of information.
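
To make "recombination" concrete, here is a minimal sketch in Python. It is not a GAN, a VAE, or the system from the opening anecdote. It is a much simpler stand-in, a bigram Markov chain, chosen only because it fits in a few lines. The corpus, the function names `build_bigram_table` and `generate`, and the seed value are all hypothetical choices made for illustration. The program records which word follows which in a tiny text, then samples new sequences from those observed pairs.

```python
import random
from collections import defaultdict

# Hypothetical toy corpus, loosely echoing the fragment quoted earlier.
CORPUS = (
    "i was walking under a red sky . "
    "the fish were singing under the sea . "
    "the sky was singing a red song ."
)


def build_bigram_table(text):
    """Map each word to the list of words observed directly after it."""
    words = text.split()
    table = defaultdict(list)
    for current, following in zip(words, words[1:]):
        table[current].append(following)
    return table


def generate(table, start, max_words=12, seed=None):
    """Sample a word sequence by repeatedly picking an observed successor."""
    rng = random.Random(seed)  # seeded so a run can be repeated exactly
    word = start
    output = [word]
    for _ in range(max_words - 1):
        successors = table.get(word)
        if not successors:  # dead end: this word was never followed by anything
            break
        # Repeated entries in the list act as frequency weights.
        word = rng.choice(successors)
        output.append(word)
    return " ".join(output)


if __name__ == "__main__":
    table = build_bigram_table(CORPUS)
    print(generate(table, start="the", seed=7))
```

Every word the program emits, and every adjacent pair of words, already occurs in the corpus. The output can still read as new and slightly surreal, because the pairs are chained in an order the corpus never contained. Larger generative models are far more sophisticated, but the sketch shows in miniature how dream-like text can arise from learned probabilities alone.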

3. Imagination vs. Simulation

Human imagination is not simply the rearrangement of existing data.

It is the ability to transcend experience—to create meaning, to express emotion, and to construct realities that do not yet exist. Imagination is often born from desire, fear, memory, and even suffering.

AI, by contrast, operates through simulation.

It can generate novel combinations, but these combinations lack intrinsic meaning. They are not driven by intention or emotional depth.

Thus, while AI outputs may resemble imagination, their underlying nature remains fundamentally different.

4. Are AI “Dreams” Meaningless?

Not necessarily.

AI-generated dream-like content can serve as a mirror reflecting human cognition.

By observing how AI constructs narratives from data, we gain insight into what distinguishes human thought—emotion, subjectivity, and meaning-making.

In this sense, AI does not replace imagination—it helps us better understand it.

Moreover, the idea of AI dreaming raises deeper philosophical questions:

- What is consciousness?

- What defines imagination?

- Can meaning exist without experience?

These questions extend beyond technology into the core of human existence.

Conclusion: The Dreaming Mind

AI calculates. Humans dream.

This difference is not merely technical—it is ontological.

Yet the very act of imagining that AI could dream is itself a uniquely human capacity.

Perhaps AI dreams exist only within our imagination.

But that imagination reveals something profound about us.

We are not just thinking beings.

We are dreaming beings.

A Question for Readers

If an AI creates something that feels like a dream, does the meaning come from the machine, or from us?

Related Reading

The boundary between artificial processing and human imagination is further examined in “Does Language Shape Thought, or Does Thought Shape Language?”, where the relationship between structure and meaning reveals how both humans and machines may rely on underlying systems to generate what appears to be “thought.”

At a deeper cognitive level, the relationship between internal experience and expression is examined in “Why Do We Remember Regret Longer Than Failure?”, where the interplay between memory, emotion, and perception reveals how uniquely human processes shape not only our thoughts, but also the narratives we construct about ourselves.

References

- Hobson, J. A. (2002). Dreaming: An Introduction to the Science of Sleep. Oxford: Oxford University Press.

Hobson explains how dreams emerge from neural activity during sleep, offering a scientific perspective on the boundary between unconscious processes and imagination. This work helps distinguish biological dreaming from artificial simulation.

- Boden, M. A. (2016). AI: Its Nature and Future. Oxford: Oxford University Press.

Boden explores the nature of creativity in artificial intelligence, questioning whether machines can truly “imagine” or merely simulate creative processes. The book provides a philosophical framework for understanding AI-generated outputs.

- Sutton, R. S., & Barto, A. G. (2018). Reinforcement Learning: An Introduction (2nd ed.). Cambridge, MA: MIT Press.

This foundational text explains how AI systems use internal models and simulations to predict and optimize outcomes. These mechanisms can resemble “dreaming” processes but remain grounded in computation rather than experience.

- Hassabis, D., Kumaran, D., Summerfield, C., & Botvinick, M. (2017). Neuroscience-Inspired Artificial Intelligence. Neuron, 95(2), 245–258.

This paper examines how human memory and imagination inspire AI architectures, particularly in simulation and prediction. It highlights the intersection between biological cognition and artificial systems.

- Revonsuo, A. (2000). The Reinterpretation of Dreams: An Evolutionary Hypothesis of the Function of Dreaming. Behavioral and Brain Sciences, 23(6), 877–901.

Revonsuo proposes that dreaming serves as a survival-oriented simulation mechanism, offering an evolutionary explanation for dream function. This perspective provides a useful comparison with AI-based simulations.